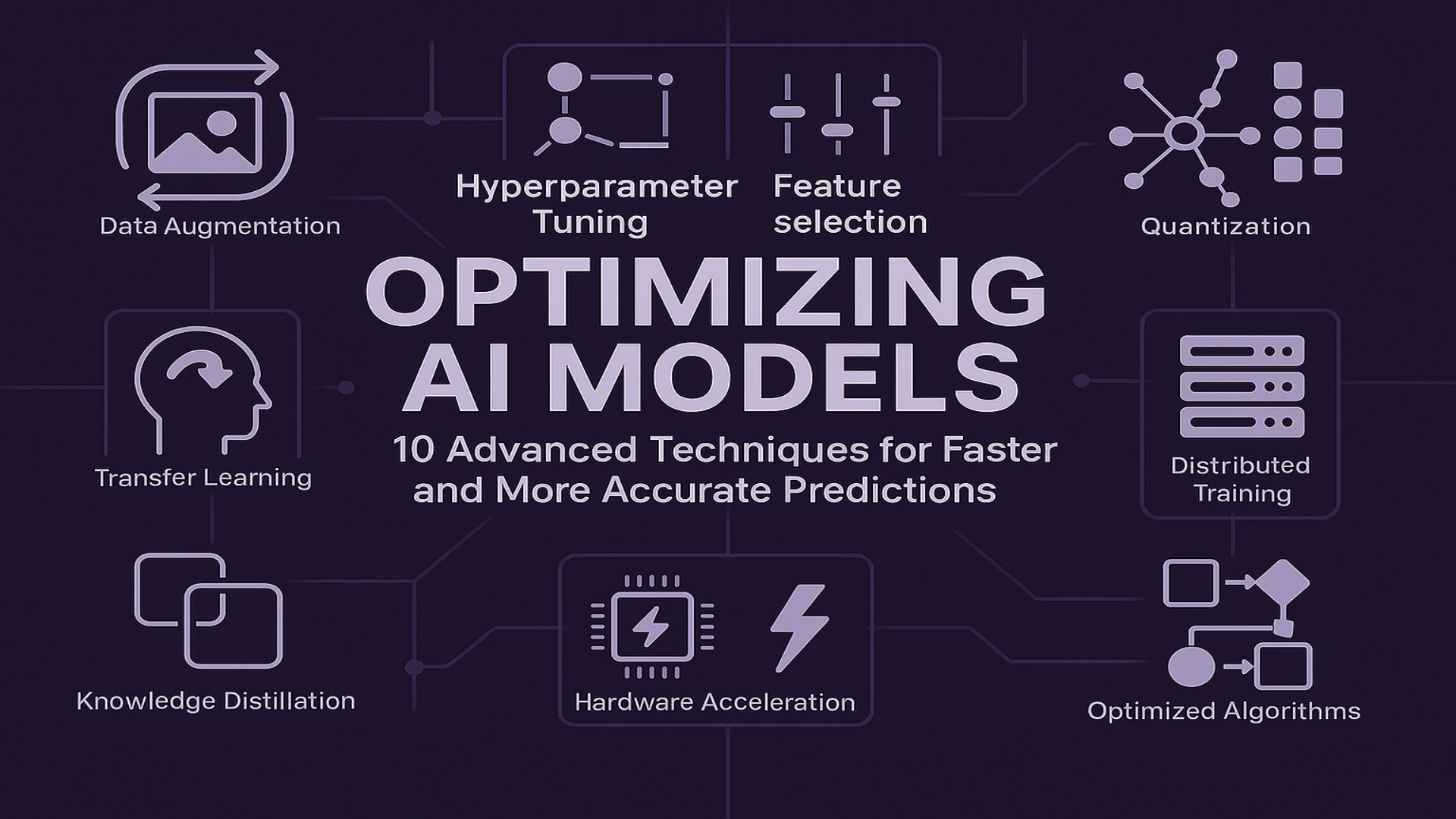

Efficiency and accuracy largely determine whether a machine learning model is useful in practice, so model optimization has become a central concern for developers and researchers alike. This article walks through ten advanced techniques that can make your models faster, smaller, or more accurate, along with the main trade-offs of each.

1. Data Augmentation: Enriching Datasets for Robust Models

Data augmentation plays a crucial role in enhancing AI performance. Simply put, this technique involves generating new training data from existing data by applying transformations like rotations, flips, and zooms. As a result, ML models learn to generalize better, reducing overfitting and improving robustness. For example, in image recognition, rotating images of objects allows a model to recognize those objects from different angles.

Pros and Cons: Data augmentation is highly effective at increasing dataset diversity and model robustness, but it may also introduce computational overhead and require careful tuning to avoid unrealistic data variations.
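
As a minimal sketch of the flips and rotations described above, the following uses plain Python lists as a stand-in for image arrays. The helper names (`horizontal_flip`, `rotate_90_clockwise`, `augment`) and the tiny 2x2 "image" are illustrative assumptions; a real pipeline would typically use an image library and add zooms or crops as well.

```python
import random

def horizontal_flip(image):
    """Mirror each row left to right."""
    return [row[::-1] for row in image]

def rotate_90_clockwise(image):
    """Rotate a 2D grid of pixels 90 degrees clockwise."""
    return [list(row) for row in zip(*image[::-1])]

def augment(image, rng):
    """Return a randomly flipped and rotated copy; the label stays the same."""
    out = [list(row) for row in image]
    if rng.random() < 0.5:
        out = horizontal_flip(out)
    for _ in range(rng.randrange(4)):  # 0 to 3 quarter turns
        out = rotate_90_clockwise(out)
    return out

# Hypothetical 2x2 grayscale image.
image = [[1, 2],
         [3, 4]]

rng = random.Random(0)  # seeded so the run is repeatable
augmented_batch = [augment(image, rng) for _ in range(4)]
```

Each augmented copy contains the same pixels in a different arrangement, which is what lets one labeled example stand in for several viewpoints.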

2. Feature Selection: Streamlining Inputs for Efficiency

Feature selection reduces the dimensionality of your dataset. By identifying and keeping only the most relevant features, you can simplify the model and shorten training times, and often improve accuracy by removing noisy inputs. For instance, when predicting housing prices, keeping relevant features like square footage and number of bedrooms while dropping less relevant ones can improve model performance.

Pros and Cons: This technique helps in reducing complexity and speeding up training, yet it can risk discarding potentially useful information if not executed with domain expertise.
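
One simple way to rank features is by their absolute Pearson correlation with the target, sketched below for the housing example. This filter approach is only one of several selection strategies, and the feature names and numbers are made up for illustration.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    std_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    std_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    if std_x == 0 or std_y == 0:
        return 0.0  # a constant column carries no linear signal
    return cov / (std_x * std_y)

def select_top_k(features, target, k):
    """Keep the k features most strongly correlated with the target."""
    scores = {name: abs(pearson(col, target)) for name, col in features.items()}
    return sorted(scores, key=scores.get, reverse=True)[:k]

# Hypothetical housing data; prices are in thousands.
features = {
    "square_feet": [800, 1200, 1500, 2000, 2400],
    "bedrooms": [1, 2, 3, 3, 4],
    "house_number": [17, 4, 52, 9, 31],
}
price = [160, 235, 310, 395, 480]

selected = select_top_k(features, price, k=2)
```

Correlation only captures linear, one-feature-at-a-time relationships, which is one reason domain expertise still matters when deciding what to drop.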

3. Hyperparameter Tuning: Fine-Tuning for Optimal Performance

Hyperparameter tuning adjusts the settings that control the learning process, such as the learning rate or batch size. Techniques such as grid search and Bayesian optimization systematically explore the hyperparameter space to find configurations that improve overall model performance.

Pros and Cons: While hyperparameter tuning can significantly boost performance by finding the best settings, it can be time-consuming and computationally expensive, especially with large search spaces.
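
Grid search, the simpler of the two techniques mentioned above, can be sketched in a few lines. The toy model (fitting `y = w * x` by gradient descent), the data, and the grid values are all assumptions chosen so the example runs instantly; only the exhaustive loop over combinations is the point.

```python
import itertools

def train_and_validate(learning_rate, n_steps):
    """Fit y = w * x with gradient descent and return the validation MSE."""
    train = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]
    valid = [(4.0, 8.0), (5.0, 10.0)]
    w = 0.0
    for _ in range(n_steps):
        grad = sum(2 * (w * x - y) * x for x, y in train) / len(train)
        w -= learning_rate * grad
    return sum((w * x - y) ** 2 for x, y in valid) / len(valid)

def grid_search(grid):
    """Evaluate every combination in the grid and keep the best one."""
    names = list(grid)
    best_params, best_score = None, float("inf")
    for values in itertools.product(*(grid[name] for name in names)):
        params = dict(zip(names, values))
        score = train_and_validate(**params)
        if score < best_score:
            best_params, best_score = params, score
    return best_params, best_score

# Hypothetical search space: 3 x 2 = 6 training runs.
grid = {"learning_rate": [0.001, 0.01, 0.1], "n_steps": [10, 50]}
best_params, best_score = grid_search(grid)
```

The number of runs is the product of the list lengths, which is why the cost grows so quickly as more hyperparameters are added.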

4. Model Pruning: Reducing Redundancy for Speed

Model pruning removes redundant or less important weights from a neural network. The resulting model is smaller and faster and, when pruning is done carefully, retains most of its predictive accuracy, making pruning a valuable step in the optimization process.

Pros and Cons: Pruning reduces model size and increases speed, but overly aggressive pruning may lead to loss of essential information, potentially degrading the model’s accuracy.
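
A common way to decide which weights are "less important" is by magnitude: the weights closest to zero are removed first. The sketch below applies this to a flat list of weights; the function name, the example weights, and the 50% sparsity target are illustrative assumptions, and real frameworks usually prune per layer and then fine-tune the model.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the given fraction of weights with the smallest magnitude."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices sorted from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned_indices = set(order[:n_prune])
    return [0.0 if i in pruned_indices else w for i, w in enumerate(weights)]

# Hypothetical layer weights.
weights = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02]
pruned = magnitude_prune(weights, sparsity=0.5)
# pruned == [0.8, 0.0, 0.3, 0.0, -0.6, 0.0]
```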

5. Quantization: Compressing Models for Resource Efficiency

Quantization reduces the numerical precision of the model’s weights and activations, for example from 32-bit floating point to 8-bit integers. This decreases the memory footprint and computational requirements of the model, which is particularly beneficial when deploying on resource-constrained devices such as mobile phones.

Pros and Cons: Quantization can drastically reduce resource consumption and increase deployment flexibility, yet it may slightly reduce model accuracy if not carefully managed.
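
To make the idea concrete, here is a minimal sketch of symmetric 8-bit quantization: each weight is stored as an integer in [-127, 127] together with one shared scale factor. This is one simple scheme among several, and the function names and example weights are assumptions for illustration.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float values from the integers."""
    return [q * scale for q in quantized]

# Hypothetical weights.
weights = [-1.0, -0.5, 0.0, 0.25, 1.0]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

# The rounding error per weight is at most half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

The restored values are close to the originals but not identical, which is the source of the small accuracy loss noted above.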

6. Knowledge Distillation: Transferring Expertise for Compact Models

Knowledge distillation allows us to transfer the expertise from a large, complex model (teacher) to a smaller, more efficient model (student). In doing so, the student model learns to mimic the teacher’s behavior, achieving comparable accuracy with fewer parameters.

Pros and Cons: This method enables smaller models to achieve high performance, but the process depends heavily on the quality of the teacher model and may involve complex training setups.
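
One common formulation of "mimicking the teacher" compares the two models' output distributions after softening them with a temperature, as in the sketch below. The logits are made-up values, and in practice this term is usually combined with an ordinary loss on the true labels.

```python
import math

def softmax(logits, temperature=1.0):
    """Softmax with temperature; higher temperatures give softer distributions."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL divergence from teacher to student on softened outputs, scaled by T^2."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl

# Hypothetical logits for one example with three classes.
teacher_logits = [4.0, 1.0, 0.5]
student_logits = [2.0, 1.5, 0.2]
loss = distillation_loss(student_logits, teacher_logits)
```

The loss is zero when the student reproduces the teacher's distribution exactly and grows as the two diverge.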

7. Transfer Learning: Leveraging Pre-trained Models for Faster Learning

Transfer learning starts from a model that has already been trained on a large dataset. Fine-tuning that model on a specific task can significantly reduce training time and improve performance, especially when task-specific data is limited. The approach works well in many real-world applications, such as medical image analysis, where pre-trained models can be fine-tuned to detect specific diseases.

Pros and Cons: Transfer learning reduces training time and can enhance performance on limited data, though its effectiveness relies on the relevance of the pre-trained model to the target task.
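
A frequent form of transfer learning keeps the pre-trained feature extractor frozen and trains only a small task-specific head on top of it. The toy sketch below shows that pattern; `pretrained_features` is a hypothetical stand-in for a real pre-trained network, and the data is synthetic.

```python
def pretrained_features(x):
    """Stand-in for a frozen, pre-trained backbone (hypothetical)."""
    return [x, x * x]

def train_head(data, lr=0.1, steps=500):
    """Train only the linear head; the backbone above is never updated."""
    w = [0.0, 0.0]
    for _ in range(steps):
        grad = [0.0, 0.0]
        for x, y in data:
            feats = pretrained_features(x)
            error = sum(wi * fi for wi, fi in zip(w, feats)) - y
            for i, fi in enumerate(feats):
                grad[i] += 2 * error * fi / len(data)
        w = [wi - lr * gi for wi, gi in zip(w, grad)]
    return w

# Small synthetic target task: y = 2 * x^2 - x.
xs = [-1.0, -0.5, 0.0, 0.5, 1.0]
data = [(x, 2 * x * x - x) for x in xs]
head_weights = train_head(data)  # approaches [-1.0, 2.0]
```

Because only two head weights are learned, five examples are enough here; this mirrors why transfer learning helps when labeled data for the target task is scarce.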

8. Distributed Training: Scaling Up for Large Datasets

Distributed training allows you to train ML models on multiple machines or GPUs simultaneously. This approach significantly accelerates the training process for large datasets and complex models, making it an indispensable technique for scaling AI projects.

Pros and Cons: Distributed training provides major speed advantages and scalability, but it introduces complexity in synchronizing processes and requires robust infrastructure.
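
The synchronization mentioned above is easiest to see in synchronous data parallelism: each worker computes a gradient on its own shard of the data, the gradients are averaged, and every worker applies the same update. The sketch below simulates this in a single process; the function names and data are assumptions, and a real setup would run the shards on separate devices through a distributed training framework.

```python
def gradient(w, shard):
    """Gradient of the mean squared error for y = w * x on one data shard."""
    return sum(2 * (w * x - y) * x for x, y in shard) / len(shard)

def all_reduce_mean(values):
    """Simulates the all-reduce step that averages gradients across workers."""
    return sum(values) / len(values)

def data_parallel_step(w, shards, lr):
    # In a real system each gradient would be computed on a different device.
    local_grads = [gradient(w, shard) for shard in shards]
    return w - lr * all_reduce_mean(local_grads)

# Hypothetical dataset following y = 3 * x, split evenly across two workers.
data = [(1.0, 3.0), (2.0, 6.0), (3.0, 9.0), (4.0, 12.0)]
shards = [data[:2], data[2:]]

w = 0.0
for _ in range(100):
    w = data_parallel_step(w, shards, lr=0.05)
# w converges toward 3.0
```

With equal-sized shards, the averaged gradient equals the gradient on the full batch, so the result matches single-machine training while the per-worker cost shrinks.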

9. Hardware Acceleration: Utilizing Specialized Processors

Hardware acceleration involves the use of specialized processors like GPUs and TPUs, which are designed to handle the parallel computations required for AI models. This results in significant speedups during both training and inference phases.

Pros and Cons: Specialized processors offer impressive performance boosts, yet the initial cost and setup complexity can be barriers for smaller projects or organizations with limited resources.

10. Optimized Algorithms: Improving Underlying Computational Efficiency

Finally, the choice of algorithm matters. Selecting and implementing computationally efficient algorithms can reduce processing times without compromising accuracy. For example, adaptive learning rate optimizers such as Adam often converge in fewer iterations than plain gradient descent with a fixed learning rate, although the gain depends on the problem.

Additionally, optimized libraries for matrix operations, such as BLAS on CPUs or cuBLAS on NVIDIA GPUs, can substantially speed up neural network computations. For signal processing tasks, the Fast Fourier Transform (FFT) computes the discrete Fourier transform in O(n log n) time rather than the O(n²) of direct evaluation.

Pros and Cons: Optimized algorithms can greatly enhance speed and efficiency, yet they may require specialized knowledge and sometimes involve trade-offs in terms of algorithm complexity or adaptability to different data types.
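
To illustrate the FFT case, the sketch below implements both the direct discrete Fourier transform and a recursive radix-2 FFT and checks that they agree. It is a teaching example restricted to power-of-two lengths; production code would call an optimized library instead. The test signal is an assumption.

```python
import cmath

def naive_dft(x):
    """Direct evaluation of the DFT: n output bins, each a sum over n samples."""
    n = len(x)
    return [
        sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]

def fft(x):
    """Recursive radix-2 Cooley-Tukey FFT; length must be a power of two."""
    n = len(x)
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a power of two")
    if n == 1:
        return [complex(x[0])]
    even = fft(x[0::2])
    odd = fft(x[1::2])
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out

# Hypothetical signal: a sine wave completing two cycles over eight samples.
signal = [0.0, 1.0, 0.0, -1.0, 0.0, 1.0, 0.0, -1.0]
spectrum = fft(signal)
reference = naive_dft(signal)
max_difference = max(abs(a - b) for a, b in zip(spectrum, reference))
```

Both functions produce the same spectrum up to floating-point error; the FFT simply reuses the half-size results instead of recomputing every sum.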

Model Evaluation Considerations

The effectiveness of these optimization techniques can only be judged through proper model evaluation. Key metrics such as accuracy, precision, recall, and F1-score, along with computational efficiency metrics like latency and throughput, should be tracked throughout the optimization process.

Pros and Cons: Rigorous evaluation ensures that optimizations lead to genuine improvements, but it can also require significant effort to set up comprehensive testing environments and track multiple performance metrics.
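
As a starting point, the quality and latency metrics listed above can be computed with a few lines of code. The sketch below handles binary classification only; the labels, predictions, and the `predict` callable passed to the timing helper are illustrative assumptions.

```python
import time

def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall, and F1 for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }

def mean_latency_ms(predict, inputs, repeats=100):
    """Average wall-clock time per prediction, in milliseconds."""
    start = time.perf_counter()
    for _ in range(repeats):
        for x in inputs:
            predict(x)
    elapsed = time.perf_counter() - start
    return 1000 * elapsed / (repeats * len(inputs))

# Hypothetical labels and predictions.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]
metrics = classification_metrics(y_true, y_pred)
# accuracy, precision, recall, and F1 are all 0.75 for this data

latency = mean_latency_ms(lambda x: x * 2, [1, 2, 3])
```

Recording these numbers before and after each optimization step makes it clear whether a speedup came at the cost of prediction quality.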

In conclusion, these ten advanced techniques offer a comprehensive toolkit for optimizing AI models. By implementing these strategies, developers and researchers can achieve faster, more accurate predictions, ultimately driving innovation and efficiency across various applications. While each technique has its own set of benefits and challenges, the key lies in selecting the right combination that aligns with your project’s goals. To further your AI optimization journey, explore our AI optimization services and learn more about implementing these techniques in your projects.